Scenario-Based Testing: How to Write Realistic Task Scenarios

Scenario-based testing is one of the most effective ways to find defects that slip past traditional test case structures, but many teams approach it the wrong way. They write scenarios that sound reasonable in a conference room but fall apart the moment a real user tries to accomplish something. The gap between a good scenario and a throwaway checklist item determines whether your testing actually catches the problems that cost users patience or money.

This isn’t about following a template. It’s about understanding how real people think, what they actually want to accomplish, and where systems fail in the space between intention and execution. This requires abandoning the habit of testing features in isolation and instead forcing your scenarios to live in the messy reality of actual user behavior.

Understanding Scenario-Based Testing

Scenario-based testing evaluates a system by placing it in the context of a complete user journey rather than examining individual functions separately. Instead of verifying that a button accepts a click and a form accepts input, you construct a narrative: the user arrives with a specific goal, navigates through multiple steps, encounters complications, and either succeeds or fails in ways that reveal genuine system weaknesses.

This approach gained popularity in the early 2000s, largely through Cem Kaner's writing and teaching on scenario testing. Kaner argued that traditional, step-by-step test cases often missed defects that emerged only from realistic combinations of features. His position was controversial at the time because it challenged the dominant paradigm of detailed, scripted test cases. Today, most experienced testing organizations recognize that both approaches have value, but the industry still struggles with execution.

The main advantage of scenario-based testing is its ability to expose integration failures and workflow gaps. A feature might work perfectly in isolation (a payment processor validates a card correctly, an inventory system updates in real time, a notification system dispatches messages successfully) yet collapse when combined with realistic user behavior: the user abandons the payment page when inventory appears unavailable, the notification arrives before the inventory update completes, and the system enters an inconsistent state that no single test case would catch.
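One way to make the notification-before-inventory failure checkable is an ordering oracle run over an event log captured during a scenario. The sketch below assumes a hypothetical log of `(order_id, event)` tuples with the made-up event names `inventory_committed` and `notification_sent`; a real system would need its own instrumentation to produce such a log.

```python
from typing import Iterable, List, Tuple

Event = Tuple[str, str]  # (order_id, event_name); event names are hypothetical


def find_ordering_violations(events: Iterable[Event]) -> List[str]:
    """Return order IDs whose notification was sent before inventory committed."""
    committed = set()
    violations: List[str] = []
    for order_id, name in events:
        if name == "inventory_committed":
            committed.add(order_id)
        elif name == "notification_sent" and order_id not in committed:
            # The user was told the order succeeded while inventory was still pending.
            if order_id not in violations:
                violations.append(order_id)
    return violations


log = [
    ("A1", "inventory_committed"),
    ("A1", "notification_sent"),
    ("B2", "notification_sent"),    # arrives too early
    ("B2", "inventory_committed"),
]
print(find_ordering_violations(log))  # ['B2']
```

No individual component fails here; the defect exists only in the interleaving, which is exactly what a scenario run can surface and an isolated test cannot.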

The Anatomy of a Realistic Task Scenario

A genuinely useful scenario contains several critical elements that distinguish it from a simple test case or user story. Understanding these components allows you to construct scenarios that actually stress your system rather than merely exercise it.

The first element involves a believable user context. Your scenario must establish who the user is, how technically sophisticated they are, and what constraints they operate under. A scenario describing "a user who needs to export their data" differs significantly from "a compliance officer at a mid-sized hospital who must generate monthly reports for three different regulatory bodies before the 15th of each month, using only the legacy browser version that IT has mandated for all systems." The second version contains constraints that will actually expose problems; the first could describe almost anyone.

The second element involves genuine motivation and stakes. Users don’t interact with systems for no reason; they have problems to solve and consequences for failure. Your scenario should make those stakes visible. When a scenario includes meaningful consequences for failure—deadlines, financial penalties, reputational risk, customer dissatisfaction—you’re more likely to surface defects that matter rather than trivial interface inconsistencies.

The third element requires authentic complexity. Real users take unexpected paths. They double-back, abandon and resume tasks, switch between devices, get interrupted, make mistakes and attempt recovery. A scenario that follows the happy path from start to finish isn’t a scenario—it’s a scripted walkthrough. Realistic scenarios include detours, errors, and complications that mirror actual user behavior.
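The three elements above can double as a review checklist for draft scenarios. This is a minimal sketch, assuming a hypothetical `ScenarioDraft` structure with one field per element; it only detects missing elements and cannot judge whether the ones present are realistic.

```python
from dataclasses import dataclass, field
from typing import List


@dataclass
class ScenarioDraft:
    user_context: str = ""  # who the user is and what constrains them
    stakes: str = ""        # what happens if they fail
    complications: List[str] = field(default_factory=list)  # detours, errors, interruptions


def review(draft: ScenarioDraft) -> List[str]:
    """List the missing elements that reduce a draft to a scripted walkthrough."""
    problems = []
    if not draft.user_context.strip():
        problems.append("no believable user context")
    if not draft.stakes.strip():
        problems.append("no stakes or consequences")
    if not draft.complications:
        problems.append("happy path only: add detours or errors")
    return problems


weak = ScenarioDraft(user_context="a user who needs to export their data")
print(review(weak))
# ['no stakes or consequences', 'happy path only: add detours or errors']
```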

Writing Scenarios That Uncover Real Problems

The transition from understanding what makes a good scenario to constructing one requires practice and a deliberate shift in thinking. Most testers, when asked to write scenarios, default to describing system behavior rather than user experience. They write “the system should validate the input and display an error message” rather than “the user enters an invalid email address because they mistyped it while rushing to complete the form before their child interrupted them, and they should receive a clear error within two seconds without losing the data they’ve already entered.”

The first version tests a requirement. The second version tests whether the system handles a realistic failure mode gracefully. Both have value, but only the second approach tends to surface the issues that cause users to abandon tasks or contact support.
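The second, user-centered version can still be checked mechanically. The sketch below assumes a hypothetical `submit_form` handler that returns both its errors and the fields to redisplay; the email pattern is deliberately loose and is not a full address validator. The two-second response requirement is not modeled and would have to be measured against the real system.

```python
import re
from typing import Dict, List, Tuple

# Deliberately loose pattern for illustration; not a complete email validator.
EMAIL_PATTERN = re.compile(r"^[^@\s]+@[^@\s]+\.[^@\s]+$")


def submit_form(fields: Dict[str, str]) -> Tuple[List[str], Dict[str, str]]:
    """Hypothetical form handler: returns (errors, fields to redisplay)."""
    errors = []
    if not EMAIL_PATTERN.match(fields.get("email", "")):
        errors.append("Check your email address; it looks incomplete or mistyped.")
    # Return everything the user typed so nothing has to be re-entered.
    return errors, dict(fields)


# The rushed user drops the dot before "com".
entered = {"name": "Dana Cruz", "address": "12 Elm St", "email": "dana@examplecom"}
errors, redisplayed = submit_form(entered)
assert errors, "a mistyped email should produce an error"
assert redisplayed == entered, "previously entered data must survive the error"
```

The second assertion is the one a requirement-style test tends to omit: it checks the recovery path, not just the validation rule.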

When constructing scenarios, start with a specific user goal and work backward through the steps required to achieve it—but include the complications that actually occur in practice. Consider the user’s emotional state, time pressure, technical constraints, and prior knowledge. A user who has never seen your interface before behaves differently than an experienced power user. A user who is frustrated from previous failed attempts behaves differently than one approaching the task optimistically.

For example, consider a scenario for an e-commerce checkout flow. A weak version might read: “User adds items to cart, enters shipping information, completes payment, and receives confirmation.” This scenario would likely pass testing while the checkout flow still contains significant defects. A stronger version might read: “A customer in a rural area with unreliable cellular connectivity adds items to their cart over the course of three sessions spread across two days. During checkout, their connection drops mid-process. They return to find their cart intact but the saved payment method has expired. They attempt to re-enter payment information but realize they’ve forgotten which email address they used for their PayPal account. They switch to credit card payment, encounter a validation error because their billing address doesn’t match their shipping address exactly, and abandon the purchase.”

This scenario would likely expose several integration and error-handling issues that the simpler version would miss entirely.
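A narrative like the stronger checkout scenario can be turned into an executable script with a checkpoint at each complication. This is a sketch against a hypothetical in-memory `Account` and `checkout` function whose rules simply mirror the complications in the story (expired saved card, strict billing/shipping match); the PayPal detour is not modeled. The value lies in the assertions between steps: the cart survives the dropped connection and the abandonment, and each error names the actual problem.

```python
from dataclasses import dataclass, field
from datetime import date
from typing import List, Optional


@dataclass
class PaymentMethod:
    kind: str
    expires: date


@dataclass
class Account:
    cart: List[str] = field(default_factory=list)  # assumed stored server-side, survives sessions
    saved_payment: Optional[PaymentMethod] = None


def checkout(account: Account, today: date, billing_zip: str, shipping_zip: str,
             payment: Optional[PaymentMethod] = None) -> str:
    """Hypothetical checkout rules used only to script the scenario."""
    method = payment or account.saved_payment
    if method is None:
        return "error: no payment method"
    if method.expires < today:
        return "error: saved payment method has expired"
    if billing_zip != shipping_zip:
        return "error: billing address does not match shipping address"
    account.cart.clear()
    return "confirmed"


# Three sessions over two days: items accumulate in the persisted cart.
acct = Account(saved_payment=PaymentMethod("card", date(2024, 5, 31)))
for item in ["boots", "gloves", "lantern"]:
    acct.cart.append(item)

# The connection drops mid-checkout; on return the cart should be intact.
assert acct.cart == ["boots", "gloves", "lantern"]

# The saved payment method expired between sessions.
today = date(2024, 6, 2)
assert "expired" in checkout(acct, today, "59001", "59001")

# The user switches to a new card, but billing and shipping addresses differ.
new_card = PaymentMethod("card", date(2027, 1, 31))
assert "billing address" in checkout(acct, today, "59001", "59002", payment=new_card)

# The user abandons here: the cart must still be intact for a later retry.
assert acct.cart == ["boots", "gloves", "lantern"]
```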

Scenario Construction Techniques That Work

Several established techniques can help you generate scenarios that actually uncover defects rather than merely confirming that expected functionality works as specified.

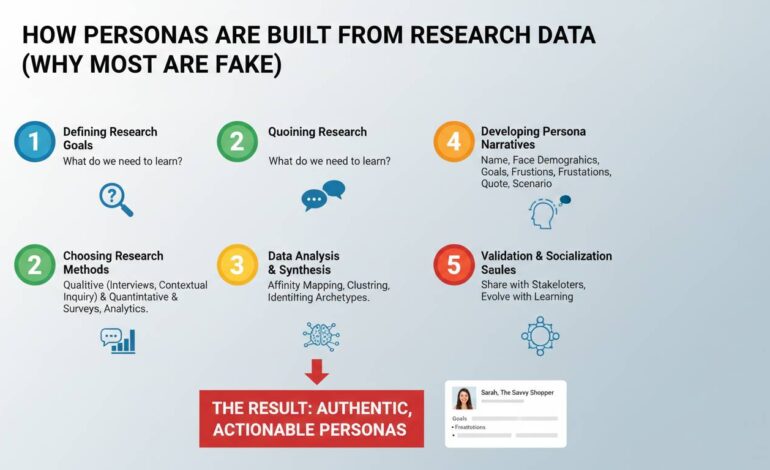

The persona-based approach involves creating detailed user personas with distinct characteristics, then forcing your scenarios to accommodate their specific constraints. Rather than generic users with undefined capabilities, your personas might include a visually impaired user relying on screen readers, a non-native speaker attempting to interpret error messages, a user with motor impairments who navigates exclusively via keyboard, or an elderly user who is uncomfortable with technology and proceeds cautiously. Each persona exposes different failure modes in your system.
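A simple way to force every persona's constraints onto every task is to generate the cross product and then prune. The personas and tasks below are hypothetical placeholders; in practice they should come from user research, and because the full product grows quickly, most teams would select the pairings most likely to fail rather than run all of them.

```python
from itertools import product

# Hypothetical personas and tasks; replace with research-backed ones.
personas = {
    "screen_reader_user": "navigates with a screen reader",
    "keyboard_only_user": "cannot use a mouse",
    "non_native_speaker": "reads error messages in a second language",
    "cautious_senior": "hesitates, rereads, and backs out when unsure",
}
tasks = ["reset password", "update billing address"]


def scenario_matrix(personas, tasks):
    """Pair every task with every persona so each constraint is exercised."""
    return [
        f"{task} as {name} ({constraint})"
        for (name, constraint), task in product(personas.items(), tasks)
    ]


for line in scenario_matrix(personas, tasks):
    print(line)
```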

The journey mapping technique involves mapping the complete end-to-end experience across multiple touchpoints, not just within your immediate application. If you’re testing a mobile banking app, the scenario doesn’t begin when the user opens the app—it begins when they realize they need to transfer money, includes their decision process for which device to use, incorporates their previous experience with the app, and extends through confirmation that the transfer succeeded. This broader view surfaces defects that occur in the transitions between systems or steps.
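Once a journey is written down step by step with the system responsible for each step, the transitions worth testing fall out directly. The journey below is a hypothetical rendering of the mobile banking example, and the system names are illustrative; the sketch just lists every point where responsibility moves from one system (or the user) to another.

```python
from typing import List, Tuple

# Hypothetical journey for the mobile banking example: (step, system that owns it).
journey: List[Tuple[str, str]] = [
    ("notices rent is due", "user"),
    ("picks phone over laptop", "user"),
    ("logs in with biometrics", "mobile app"),
    ("confirms one-time code", "SMS provider"),
    ("submits transfer", "mobile app"),
    ("transfer is posted", "core banking"),
    ("sees confirmation push", "notification service"),
]


def handoffs(steps: List[Tuple[str, str]]) -> List[str]:
    """Return the points where responsibility moves from one system to another."""
    return [
        f"{a_step} -> {b_step} ({a_sys} to {b_sys})"
        for (a_step, a_sys), (b_step, b_sys) in zip(steps, steps[1:])
        if a_sys != b_sys
    ]


for h in handoffs(journey):
    print(h)
```

Each printed handoff is a candidate place to inject delay, failure, or duplication in a scenario.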

The edge case immersion technique deliberately places your scenario in the worst possible circumstances. Network latency, expired sessions, concurrent modifications by other users, system timeouts, third-party service failures—these conditions define the difference between testing that passes in controlled environments and testing that survives production. Your scenarios should specifically include these conditions rather than assuming they’ll resolve themselves or never occur simultaneously.
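Adverse conditions are easiest to include when they are injected deliberately rather than waited for. The sketch below uses a hypothetical `FlakyService` test double that fails a fixed number of calls, so a "network drop" happens on demand and identically on every run. The simple retry loop is only one illustrative client behavior (no backoff or jitter); the point is the repeatable failure, not the retry policy.

```python
from typing import Callable


class FlakyService:
    """Test double that fails its first `failures` calls with ConnectionError."""

    def __init__(self, failures: int):
        self.failures = failures
        self.calls = 0

    def fetch_balance(self) -> int:
        self.calls += 1
        if self.calls <= self.failures:
            raise ConnectionError("simulated network drop")
        return 100


def with_retries(call: Callable[[], int], attempts: int) -> int:
    """Retry a call on ConnectionError, up to `attempts` calls in total."""
    if attempts < 1:
        raise ValueError("attempts must be at least 1")
    last_error = None
    for _ in range(attempts):
        try:
            return call()
        except ConnectionError as err:
            last_error = err
    raise last_error


service = FlakyService(failures=2)
print(with_retries(service.fetch_balance, attempts=3))  # 100
```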

Common Mistakes That Undermine Scenario Effectiveness

Despite widespread acknowledgment of scenario-based testing’s value, several persistent mistakes prevent teams from realizing its full benefits. Recognizing these patterns allows you to avoid them in your own practice.

The most damaging mistake involves treating scenarios as test cases with narrative wrappers. When teams simply take their existing test cases and rewrite them in paragraph form, they gain nothing. The scenarios still test individual features in isolation, still follow the happy path, and still fail to expose integration problems. The narrative format provides an illusion of thoroughness without actual substance.

Another frequent error involves creating scenarios that are too complex to execute consistently. Some teams, in their enthusiasm for realistic scenarios, construct elaborate multi-step narratives that require significant setup, specific environmental conditions, and careful orchestration to reproduce. While these scenarios may occasionally surface important defects, they’re rarely run consistently because they’re too difficult to execute. The most valuable scenarios balance realism with repeatability—they describe realistic situations that testers can reproduce without elaborate preparation.
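One way to keep realistic complications without losing repeatability is to choose them with a seeded random generator and record the seed alongside the scenario, so a failing run can be replayed exactly. The complication list and step names below are hypothetical.

```python
import random
from typing import List, Tuple

# Hypothetical complications a tester can apply by hand or via test doubles.
COMPLICATIONS = ["network drop", "session timeout", "phone call interruption",
                 "back button pressed", "duplicate tab opened"]


def complication_plan(steps: List[str], seed: int, count: int = 2) -> List[Tuple[str, str]]:
    """Attach a complication to `count` distinct steps, reproducibly for a given seed."""
    rng = random.Random(seed)  # local generator: same seed, same plan
    chosen = sorted(rng.sample(range(len(steps)), count))
    return [(steps[i], rng.choice(COMPLICATIONS)) for i in chosen]


steps = ["browse", "add to cart", "enter shipping", "enter payment", "confirm"]
print(complication_plan(steps, seed=7))
```

Varying the seed across runs widens exploration, while any single run stays reproducible from its recorded seed.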

The third mistake involves scenario authorship by committee. When multiple stakeholders contribute to scenario creation without clear ownership or criteria, the resulting scenarios often lack coherence or focus. They become collections of interesting situations rather than targeted tests of specific system weaknesses. Assigning clear ownership—someone responsible for ensuring each scenario has a purpose and tests something specific—prevents this drift.

Structuring Scenario Documentation

How you document scenarios significantly impacts their usefulness over time. Scenarios that require tribal knowledge to execute or contain implicit assumptions about context become worthless as team membership changes.

Each scenario should include a clear identifier, a descriptive title that communicates its purpose, the specific user goal being tested, the preconditions required for execution, the step-by-step actions to perform, and the expected outcomes. Beyond these basics, effective scenario documentation includes the reason this scenario exists—why does this particular user journey matter enough to test? What defect or risk does it address?
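The fields listed above map naturally onto a record type, which also makes it easy to catch scenarios that omit their rationale. This is a minimal sketch with a hypothetical `ScenarioRecord`; the field names are illustrative, not a standard schema.

```python
from dataclasses import dataclass, field
from typing import Dict, List


@dataclass
class ScenarioRecord:
    scenario_id: str
    title: str
    user_goal: str
    rationale: str  # why this journey matters; the defect or risk it addresses
    preconditions: List[str] = field(default_factory=list)
    steps: List[str] = field(default_factory=list)
    expected_outcomes: List[str] = field(default_factory=list)
    environment: Dict[str, str] = field(default_factory=dict)  # browser, network, device, account state

    def missing_fields(self) -> List[str]:
        """Name required fields left empty, so gaps surface before execution."""
        required = ["scenario_id", "title", "user_goal", "rationale",
                    "steps", "expected_outcomes"]
        return [name for name in required if not getattr(self, name)]


record = ScenarioRecord(
    scenario_id="CHK-014",
    title="Checkout resumes after a dropped connection",
    user_goal="Complete a purchase started in an earlier session",
    rationale="",
    steps=["Add three items over two sessions", "Drop the connection at payment"],
)
print(record.missing_fields())  # ['rationale', 'expected_outcomes']
```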

The environmental context matters as well. A scenario that assumes a specific browser, network condition, device type, or account state should document those requirements explicitly. Many teams maintain scenario libraries organized by user goal, system area, or risk category, allowing testers to select relevant scenarios based on the changes being validated.
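Selecting scenarios from a tagged library can be as simple as a set intersection. The library contents and the `area:`/`risk:` tag convention below are hypothetical; the sketch returns every scenario tagged with an area that a change touches.

```python
from typing import Dict, List, Set

# Hypothetical library: scenario ID -> tags for system area and risk category.
LIBRARY: Dict[str, Set[str]] = {
    "CHK-014": {"area:checkout", "area:payments", "risk:revenue"},
    "ACC-003": {"area:accounts", "risk:security"},
    "NOT-009": {"area:notifications", "area:checkout"},
    "RPT-021": {"area:reporting", "risk:compliance"},
}


def select_for_change(changed_areas: Set[str], library: Dict[str, Set[str]]) -> List[str]:
    """Return IDs of scenarios tagged with any area touched by the change, sorted."""
    wanted = {f"area:{a}" for a in changed_areas}
    return sorted(sid for sid, tags in library.items() if tags & wanted)


print(select_for_change({"checkout"}, LIBRARY))  # ['CHK-014', 'NOT-009']
```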

The documentation should also capture the results of previous executions. When a scenario consistently passes, it may indicate redundant testing that could be reduced. When a scenario consistently fails, it may indicate either a known defect that hasn’t been fixed or a scenario that’s too strict. Over time, this data allows you to refine your scenario library toward maximum defect detection efficiency.
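Recorded results can be summarized automatically to flag scenarios for review. This sketch assumes history is kept as a list of pass/fail booleans per scenario; the five-run minimum and the label wording are arbitrary choices for illustration, and the labels are prompts for human judgment rather than verdicts.

```python
from typing import Dict, List


def classify(history: Dict[str, List[bool]], min_runs: int = 5) -> Dict[str, str]:
    """Label each scenario from its recorded pass/fail history (True = pass)."""
    labels = {}
    for sid, runs in history.items():
        if len(runs) < min_runs:
            labels[sid] = "not enough data"
        elif all(runs):
            labels[sid] = "always passes: review for redundancy"
        elif not any(runs):
            labels[sid] = "always fails: unfixed defect or over-strict scenario?"
        else:
            labels[sid] = "mixed: keep running"
    return labels


history = {
    "CHK-014": [True] * 8,
    "ACC-003": [False] * 6,
    "NOT-009": [True, False, True, True, False],
    "RPT-021": [True, True],
}
for sid, label in classify(history).items():
    print(sid, "->", label)
```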

Integrating Scenarios Into Broader Testing Strategies

Scenario-based testing doesn’t exist in isolation—it works most effectively as part of a layered testing strategy that includes unit testing, integration testing, and traditional functional testing at appropriate levels. The scenarios you execute manually often represent the most expensive form of testing in terms of time and effort, so reserving them for the situations where they provide unique value makes economic sense.

Unit and integration tests should handle the predictable, repeatable verifications: does this function return the correct output given this input? Does this component communicate correctly with that component? These tests can run automatically and frequently, providing rapid feedback about basic correctness.

Manual scenario testing then focuses on the questions that automated tests struggle to answer: does this combination of features work in a way that makes sense to users? Do these error messages help users recover from problems? Does this workflow feel intuitive even under adverse conditions?

The division between what gets automated and what remains manual should be deliberate rather than accidental. Many teams automate scenarios that would be better left manual while manually executing scenarios that could easily be automated, resulting in inefficient testing that provides less confidence than a more thoughtful approach would deliver.

When Scenario-Based Testing Falls Short

Honest assessment requires acknowledging the limitations of any testing approach, and scenario-based testing has specific weaknesses that teams should understand.

Scenario-based testing is inherently subjective. Different testers executing the same scenario may observe different results based on their interpretation of ambiguous instructions, their assumptions about user behavior, or their tolerance for different types of problems. This subjectivity can be reduced through clear documentation and consistent criteria but cannot be eliminated entirely.

The coverage provided by scenarios is also difficult to measure. With traditional test cases, you can trace each case to a requirement and report requirements coverage, and instrumented test runs can report statement, branch, or decision coverage. With scenarios, coverage remains largely qualitative: you've tested important user journeys, but it's difficult to demonstrate that you've tested enough of them or that you've identified the most important ones. Code coverage collected during a scenario run says little about whether you chose the right journeys in the first place.

Finally, scenario-based testing requires significant domain knowledge and user empathy to execute well. Junior testers or those unfamiliar with the target users often struggle to construct meaningful scenarios or to recognize when a scenario has uncovered a genuine problem versus a minor inconsistency. This expertise requirement limits scalability—you cannot easily expand scenario-based testing by adding more testers without also investing in their training and mentorship.

Building Your Scenario Development Practice

Improving your scenario-based testing requires deliberate practice and continuous refinement. Start by examining your existing scenario library with a critical eye: How many of your scenarios would actually catch a serious production defect versus simply confirming that expected functionality works? How many involve realistic complications versus happy-path navigation?

Generate new scenarios by studying actual user behavior data: support tickets, usability testing recordings, analytics showing where users abandon tasks, and customer feedback. These real-world observations provide raw material for scenarios that test the situations where users actually struggle rather than the situations that seem problematic in theory.

Execute your scenarios consistently and track the results over time. Look for patterns: which types of scenarios consistently surface defects? Which consistently pass with no issues? This data allows you to refine your scenario library toward maximum effectiveness.

Finally, share your scenarios and their results with the broader team. Scenarios that expose defects become stories about user problems that need solving. Scenarios that pass become documentation of system behavior. In both cases, the scenario library becomes a shared asset that improves as more people contribute to its development and refinement.